Guesswork Computing Featured Signal of Change: SoC857 March 2016

The amount of data in use by almost all industries and disciplines is increasing rapidly, and tools that analyze those data are improving. Industries and activities that once required guesswork and approximations are now far more precise. To try to identify people likely to buy a product, marketers once used complex consumer-segmentation systems and market research. Today, Google (Alphabet; Mountain View, California) simply identifies likely buyers from the search terms—for example, cheap washing machines—that they use. Insurers once reduced risk by taking on more customers, expanding the pool of insureds to balance risk; today, many insurers reduce risk by pinpointing risks and being more selective about the people they insure.

Despite the general truth that information reduces uncertainty, users are sometimes overconfident in their algorithms and complex mathematical models, which leads to a false sense of certainty (arguably, the finance industry had too much confidence in its risk models leading up to the financial crisis of 2008). Many big-data applications are only engaging in guesswork and approximations—albeit kinds of guesswork that are very different from those that rely on human reasoning. For example, a growing number of applications use proxy data to model a subject of interest. These applications may be able to predict phenomena with some degree of accuracy, but they do so without providing information about the phenomena themselves.

Various start-ups are developing apps that analyze the behavioral data smartphones collect to assess the creditworthiness of users. App-based lending companies such as Branch International (San Francisco, California, and Nairobi, Kenya), Greenshoe Capital (Los Angeles, California), and InVenture (Santa Monica, California) offer loans to customers who have little or no conventional credit history. Instead of relying on conventional credit scores, such companies use risk models based on smartphone-usage data, including the times users make calls, the number of text messages users send and receive, and how quickly users drain a smartphone's battery.

SoC687 — Analysis via Social and Search-Engine Data points out that social data inform applications as diverse as financial trading, flu analysis, national-security operations, public-opinion research, and cinema-box-office forecasting. Researchers at the University of Pennsylvania (Philadelphia, Pennsylvania) recently developed a machine-learning model that can estimate Twitter (Twitter; San Francisco, California) users' income brackets on the basis of the information the users' tweets contain.

Internet of Things applications often use indirect data to model phenomena. Sleep Cycle from app developer Northcube (Göteborg, Sweden) uses data from a smartphone's microphone and accelerometer to identify the ideal time to wake users on the basis of their sleep cycle. Researchers from the University of Ouagadougou (Ouagadougou, Burkina Faso) found that the signal interference falling rain creates among cell-phone towers can provide rainfall measures. Researchers at the Massachusetts Institute of Technology's (Cambridge, Massachusetts) Computer Science and Artificial Intelligence Laboratory have developed a technique that uses Wi-Fi signals to monitor heart rate.

Modeling phenomena with indirect data increases the value of data sets (because one data set has multiple uses) and creates opportunities that would otherwise be impossible (for example, establishing credit scores for people who have no credit history). But the use of indirect data also carries risks. The most obvious risk is that systems that rely on indirect data will be flawed. In 2014, researchers at Northeastern University (Boston, Massachusetts) discovered that the accuracy of Google's Google Flu Trends—a big-data system that uses internet search terms to try to predict flu activity—had declined over a period of years and that its algorithms were not able to recalibrate themselves. A hedge fund that investors from Derwent Capital Markets (London, England) set up in 2011 to trade on the basis of Twitter data lasted only a month. Start-ups' ability to assess the creditworthiness of consumers accurately using only smartphone data is unproven.

Because machine-learning software may focus on features in data sets that humans would typically ignore, even systems that use direct data can produce unexpected results. During the past year, several research teams have demonstrated ways to trick deep-learning networks (a kind of machine-learning system) into misclassifying images. Various research teams are removing bugs in these image recognizers, but the errors highlight the fact that the way image classifiers recognize images is quite different from the way humans recognize images.

Figuring out how many errors are occurring in machine-learning systems is often difficult because many such systems are black boxes—that is, their exact chain of reasoning is incomprehensible to humans. For example, when automated trading algorithms have caused problems (including the so-called 2010 Flash Crash—a massive stock market crash that lasted less than 40 minutes), programmers have struggled to explain their algorithms' behavior.

Whether they are using direct data or indirect data, big-data systems tend to focus on correlations within data rather than on causal relationships. In 2008, Chris Anderson, then editor in chief of Wired, wrote "The End of Theory: The Data Deluge Makes the Scientific Method Obsolete," arguing that organizations and scientists no longer need hypotheses and speculation. However, systems that only correlate are at risk of making undetectable and unpredictable errors—particularly when they rely on indirect data.
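The risk of a correlation-only system built on indirect data can be illustrated with a toy sketch. Every number below is invented for illustration, and the scenario (search counts standing in for flu cases, then inflated by news coverage) is a hypothetical example, not a reconstruction of any real system. The model fits a straight line to a period in which searches track cases closely and then keeps applying that line after the link between proxy and subject has shifted.

```python
# Toy illustration: a correlation-only model built on proxy data.
# All figures are invented for this sketch.

def mean(values):
    return sum(values) / len(values)

def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    mx, my = mean(xs), mean(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    var_x = sum((x - mx) ** 2 for x in xs)
    var_y = sum((y - my) ** 2 for y in ys)
    return cov / (var_x * var_y) ** 0.5

def fit_line(xs, ys):
    """Least-squares fit of ys ~ slope * xs + intercept."""
    mx, my = mean(xs), mean(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    var_x = sum((x - mx) ** 2 for x in xs)
    slope = cov / var_x
    return slope, my - slope * mx

# Training period: searches (the proxy) closely track flu cases.
flu_cases = [10, 20, 30, 40, 50, 60]
searches = [53, 98, 151, 197, 252, 299]

r = pearson(searches, flu_cases)
slope, intercept = fit_line(searches, flu_cases)

def predict_cases(search_count):
    """Estimate cases from searches using only the fitted correlation."""
    return slope * search_count + intercept

# Later period (hypothetical): 30 actual cases, but news coverage
# adds 150 searches that have nothing to do with illness.
actual_cases = 30
observed_searches = 5 * actual_cases + 150
estimate = predict_cases(observed_searches)

print(f"correlation in training data: {r:.4f}")
print(f"actual cases: {actual_cases}, model estimate: {estimate:.1f}")
```

The correlation in the training data is very high, yet the later estimate is far from the actual figure, and nothing inside the model signals that anything has gone wrong. Detecting the error requires knowledge of why searches and cases moved together in the first place.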

In practice, the outputs of most big-data systems are maybes, not definitive results. Such systems use numerical thresholds to make probabilistic judgments rather than following logical rules to arrive at definite answers, as conventional software does. Problems with data and imperfect statistical models sometimes cause probabilistic methods to produce mistakes. Such imperfections do not mean that big-data systems are useless. Deep-learning systems that break performance benchmarks for visual-recognition tasks are becoming almost commonplace, and their capabilities far exceed those of rule-based machine-vision systems.
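The contrast between a logical rule and a thresholded probabilistic judgment can be sketched in a few lines. The function names, the input features, and the weights below are all hypothetical and chosen only for illustration; a real system would learn its weights from data.

```python
import math

def is_eligible_by_rule(age_years: int) -> bool:
    """Conventional software: a logical rule that gives a definite answer."""
    return age_years >= 18

def logistic(z: float) -> float:
    """Map any real-valued score to a probability between 0 and 1."""
    return 1.0 / (1.0 + math.exp(-z))

def buyer_probability(product_searches: int, store_page_visits: int) -> float:
    """Probabilistic model: returns a 'maybe', not a yes or no.

    The weights are hypothetical, picked by hand for this sketch.
    """
    score = -2.0 + 0.8 * product_searches + 0.5 * store_page_visits
    return logistic(score)

def is_likely_buyer(probability: float, threshold: float = 0.5) -> bool:
    """Turn the probability into a decision with a numerical threshold."""
    return probability >= threshold

p = buyer_probability(product_searches=3, store_page_visits=2)
print(f"probability: {p:.2f}")
print("decision at threshold 0.5:", is_likely_buyer(p, 0.5))
print("decision at threshold 0.9:", is_likely_buyer(p, 0.9))
```

The same person is classified differently depending on where the threshold sits, which is one reason users need to know what their system's cutoff is and how it was chosen.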

Users of big-data systems need to understand the capabilities and limitations of their algorithms and be clear about the data they are working with. These requirements should not discourage big-data projects; rather, they should sharpen the projects' focus.

The Development of this Signal of Change

Data Points

- SC-2016-02-03-040

Various start-ups are developing apps that analyze the behavioral data smartphones collect to assess the creditworthiness of users.

- SC-2016-02-03-054

Researchers at the University of Pennsylvania recently developed a machine-learning model that can estimate Twitter users' income brackets on the basis of the information the users' tweets contain.

- SC-2016-02-03-004

Sleep Cycle from app developer Northcube uses data from a smartphone's microphone and accelerometer to identify the ideal time to wake users on the basis of their sleep cycle.

Implications

Guesswork Computing

A growing number of applications use proxy data to model a subject of interest.

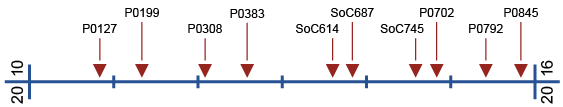

Previous Alerts

- P0127 — Insights through Network Analytics (November 2010)

New understanding of complex networks enables research to mine a wide variety of data streams emerging from these networks.

- P0199 — Data-Mining Science (May 2011)

Advances in data mining are establishing a new science paradigm.

- P0308 — Algorithms: Architects of the Invisible World (February 2012)

Algorithms capture natural, social, and economic phenomena. But ever more complex algorithms also distance users from intuitive understanding.

- P0383 — Twitter: The Collective Consciousness? (August 2012)

Increasingly sophisticated algorithms mine social media for data, offering insights into human behavioral patterns and cultural differences.

- SoC614 — Big Science: Correlating the World (October 2012)

As the tools to collect and analyze large sets of data become more powerful, new relations and associations between variables will emerge.

- SoC687 — Analysis via Social and Search-Engine Data (November 2013)

An increasing range of applications use search-engine and social data.

- SoC745 — Mapping Urban Dynamics (August 2014)

Understanding the dynamics of urban environments to improve the effectiveness and efficiency of infrastructures is one major area of interest.

- P0702 — Cultural Cartography (November 2014)

New approaches map people's enjoyment options, culture, and heritage.

- P0792 — Eavesdropping by Proxy (June 2015)

Novel methods of extracting data enable the circumvention of traditional security measures.

- P0845 — Cell-Phone Technology and Data Analysis (November 2015)

Cell-phone technology and infrastructure have unexpected uses in data analysis.