AI-Enabled Sensing

Featured Signal of Change: SoC1086, May 2019

Artificial intelligence is transforming the world of sensing by making existing sensors dramatically more effective and opening up entirely new applications for them. AI-enabled sensing has already become commonplace in smartphones, newer models of which rely on various forms of AI to enhance the images that cameras' sensors capture, to transform speech into commands or search queries, to help infer users' activities from motion-sensor data, and to perform numerous other tasks. Many tens of millions of households worldwide now have one or more smart speakers, which are essentially audio sensors that connect to cloud-based AI. But these ubiquitous examples of AI-enabled sensing represent only a subset of the applications that might eventually become possible as developers increasingly merge AI with sensing in multiple ways.

One can subdivide the emerging world of AI-enabled sensing into two broad categories: instances in which sensors act as "portals" to an AI system and instances in which an AI system tightly integrates with sensors. The former category includes smart speakers, smart security cameras, and a broadening range of other devices in which an otherwise conventional sensor connects to an AI system that is typically cloud based. Portal-type AI-enabled sensors offer developers considerable freedom to invent and deploy new sensing features without needing to change underlying hardware. For example, Audio Analytic (Cambridge, England) is developing AI that could enable the audio sensors in existing smart speakers to detect and respond to audio cues other than speech. If the sensors detect the sound of glass breaking, the AI could alert a user to a likely home break-in. Or if the sensors detect sounds that indicate that an elderly household member is in distress, the AI could summon help automatically. Ambient.ai (Palo Alto, California) has been developing a system that uses AI to make sense of what a video camera is seeing in order to deliver user-interface features. The approach is a variant of the kind of AI-based video analysis that many home security cameras already perform and is similar to the kind of AI that helps enable automated inventory management, loss prevention, and walk-through checkout at smart-retail stores from Amazon.com (Seattle, Washington), JD.com (Beijing, China), and others. Athelas (Mountain View, California) offers an AI-based portable diagnostics device for blood testing that is essentially a video microscope that connects to a cloud-based AI system. The device currently performs only one kind of test (blood counts) but could expand to perform many other kinds of tests as Athelas continues to develop its software.

Other AI-enabled sensing systems tightly integrate AI with sensors to enable new kinds of sensing or to enhance sensor performance. Many high-end smartphones now contain AI chips that, among other functions, work with the smartphones' image sensors to produce images that are clearer, less noisy, and more accurate than images that are possible without AI enhancement. University research groups from California and Germany have demonstrated how AI can enhance the resolution, phase contrast, and other performance features of optical microscopes; such techniques could one day lead to advanced optical microscopes that contain special AI chips for enhancing images in real time. The Livio AI hearing aid by Starkey Hearing Technologies (Eden Prairie, Minnesota) incorporates special hardware that reportedly uses AI to enhance speech clarity and enables language translation and a variety of other features. An experimental sensor from IBM (Armonk, New York) that attaches to a user's fingernail employs AI to detect signs of the onset of Parkinson's disease. HyperSurfaces (London, England) uses vibration sensors in combination with AI chips to transform various surfaces into user interfaces. And researchers from Carnegie Mellon University (Pittsburgh, Pennsylvania) integrated AI chips with data from a few simple sensors to yield what they refer to as "synthetic sensors," which can detect a wide range of phenomena that would typically require many varieties of specialized sensors.

The ability of AI-enabled sensors to transcend physical limitations or to "synthesize" specialized sensors from combinations of basic sensors suggests that AI-enabled sensing might provide a viable route to overcoming long-standing challenges. For example, researchers have been exploring a wide variety of techniques for monitoring blood glucose noninvasively and without the need for consumable reagents, but decades of investigation have yielded no success. Perhaps AI can enable diverse sensing methods to combine in novel ways, resulting in the creation of a noninvasive continuous blood-glucose sensor that could integrate into a low-cost wearable device.

Many examples of AI-enabled sensing systems that integrate onboard AI processors are refinements of earlier systems in which sensors acted as portals for cloud-based AI systems. For example, cloud-based systems once performed the types of image and speech processing that many smartphones now use special AI-accelerator chips to perform. Tight integration of sensing and AI processing confers advantages—including power-consumption and data-use reductions—that are important in portable use cases. But the main advantage of such integration is latency reduction, because the sensing system does not need to wait for sensor data to upload to a distant cloud server and results to travel back to the sensor before the results are available. Latency reductions from local processing can yield significant user-experience improvements for devices such as smartphones and building-automation controllers. For applications with robots, industrial machinery, and autonomous vehicles, low-latency sensor performance can be critical to safe and effective equipment operation. Moreover, in safety-critical applications, having onboard AI eliminates the possibility that network-availability problems could render sensors inoperable.
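The latency and availability argument above can be sketched as simple arithmetic. The following is a minimal illustration, not a measurement: the `LatencyBudget` class, the `response_time_ms` helper, and every millisecond figure are hypothetical values chosen only to show the shape of the trade-off between a portal-type sensor and a sensor with onboard AI.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class LatencyBudget:
    """Per-reading delays in milliseconds (all values are illustrative assumptions)."""
    inference_ms: float        # time for the AI model to process one reading
    uplink_ms: float = 0.0     # time to send sensor data to a remote server
    downlink_ms: float = 0.0   # time for the result to travel back to the sensor

    def total_ms(self) -> float:
        return self.uplink_ms + self.inference_ms + self.downlink_ms


def response_time_ms(budget: LatencyBudget, network_up: bool = True) -> Optional[float]:
    """Return end-to-end latency, or None if the path needs a network that is down."""
    needs_network = budget.uplink_ms > 0 or budget.downlink_ms > 0
    if needs_network and not network_up:
        return None
    return budget.total_ms()


# Hypothetical numbers: a fast cloud model behind a slow link versus
# a slower onboard model with no network round trip.
cloud = LatencyBudget(inference_ms=10.0, uplink_ms=40.0, downlink_ms=40.0)
onboard = LatencyBudget(inference_ms=25.0)

print(response_time_ms(cloud))                      # 90.0
print(response_time_ms(onboard))                    # 25.0
print(response_time_ms(cloud, network_up=False))    # None
print(response_time_ms(onboard, network_up=False))  # 25.0
```

Under these assumed figures, the onboard path responds faster even though its model runs more slowly, and it keeps working when the network does not.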

Developments in cellular-network technology could eventually enable some types of AI-enabled sensors to use off-board processing while having latency and reliability performance equal to that of sensors that use onboard processing. But even if AI-enabled sensors one day gain the ability to use cloud-based AI processing without latency and reliability disadvantages, it is not clear that such sensors would inevitably trend away from tight integration with onboard AI chips. In addition to allowing developers to deploy new features easily, AI-enabled sensing systems that use cloud processing continually aggregate vast amounts of data that can help train and improve AI systems dynamically, thereby improving sensor performance as time passes. But sensors that use onboard AI processing can still gather and upload such data and benefit from the resulting improvements in AI algorithms. These systems can store data, upload the data in bulk at a convenient time, and download new AI software periodically as updates become available. Autonomous vehicles from Tesla (Palo Alto, California) provide prominent examples of this kind of fusion of local and cloud-based AI-enabled sensing.
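The hybrid pattern described above (infer locally, upload stored data in bulk, install updated AI software when it becomes available) can be sketched as follows. This is a minimal illustration under stated assumptions: the `OnboardSensor` class, its method names, and the threshold "models" are hypothetical and do not describe any vendor's actual system.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class OnboardSensor:
    """Hypothetical sensor that runs inference locally and defers uploads."""
    model: Callable[[float], str]
    model_version: int = 1
    buffer: List[float] = field(default_factory=list)

    def read(self, value: float) -> str:
        # Inference happens on the device, so no network round trip is needed.
        self.buffer.append(value)
        return self.model(value)

    def sync(self, upload: Callable[[List[float]], None]) -> int:
        # At a convenient time, upload everything gathered so far in one batch.
        batch, self.buffer = self.buffer, []
        upload(batch)
        return len(batch)

    def install(self, model: Callable[[float], str], version: int) -> bool:
        # Accept a downloaded model only if it is newer than the current one.
        if version <= self.model_version:
            return False
        self.model, self.model_version = model, version
        return True


cloud_store: List[float] = []  # stands in for a cloud training-data store
sensor = OnboardSensor(model=lambda v: "alert" if v > 0.5 else "ok")

print(sensor.read(0.2))                 # ok
print(sensor.read(0.9))                 # alert
print(sensor.sync(cloud_store.extend))  # 2
print(sensor.install(lambda v: "alert" if v > 0.8 else "ok", version=2))  # True
print(sensor.read(0.6))                 # ok
```

The sensor never waits on the network to produce a result; the cloud's role in this sketch is limited to collecting data and supplying improved models.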

The Development of this Signal of Change

Data Points

- SC-2018-04-04-057: AI helps enable automated inventory management, loss prevention, and walk-through checkout at smart-retail stores from Amazon.com.
- SC-2019-06-05-096: The Livio AI hearing aid by Starkey Hearing Technologies incorporates special hardware that reportedly uses AI to enhance speech clarity and enables language translation and a variety of other features.
- SC-2019-02-06-035: HyperSurfaces uses vibration sensors in combination with AI chips to transform various surfaces into user interfaces.

Implications

AI-Enabled Sensing

Artificial intelligence is making existing sensors dramatically more effective and opening up entirely new applications for them.

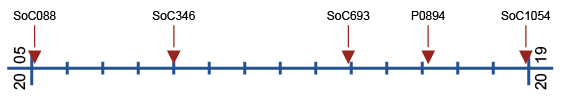

Previous Alerts

- SoC088: Sensor Synergies (February 2005). Most sensor applications currently focus on one application, but as sensors proliferate, designers are realizing that such focused applications of sensor data are costly and inefficient.
- SoC346: Advanced Sensors as Application Enablers (January 2009). Companies are increasingly able to integrate real-time (or close-to-real-time) sensor data with existing applications and services, providing a quantum leap in capabilities for business and scientific endeavors.
- SoC693: Sensor-Focused End-Consumer Applications (December 2013). Sensors and sensor arrays are taking the spotlight and becoming the primary reason for a consumer's purchase of a device.
- P0894: The Art of Packaging Sensors (March 2016). Sensors are enablers for applications, and companies are increasingly using sensor packages to create useful new products.
- SoC1054: AI on Chips (December 2018). Unprecedented access to artificial-intelligence technology is enabling new powerful systems and software for both businesses and consumers.